Core solution

IdentityIQ

IdentityIQ delivers full lifecycle and compliance management for comprehensive identity security.

Business value

SailPoint IdentityIQ is custom-built for complex enterprises

A complete solution that uses AI and machine learning to automate provisioning, access requests, access certifications, and separation-of-duties requirements.

Analyst report

2024 Gartner® Market Guide for Identity Governance and Administration

Explore key factors shaping IGA in this Gartner® report. Discover vendor evaluation recommendations, market insights, feature prioritization guidance, and emerging trends to optimize your identity security approach.

Use Cases

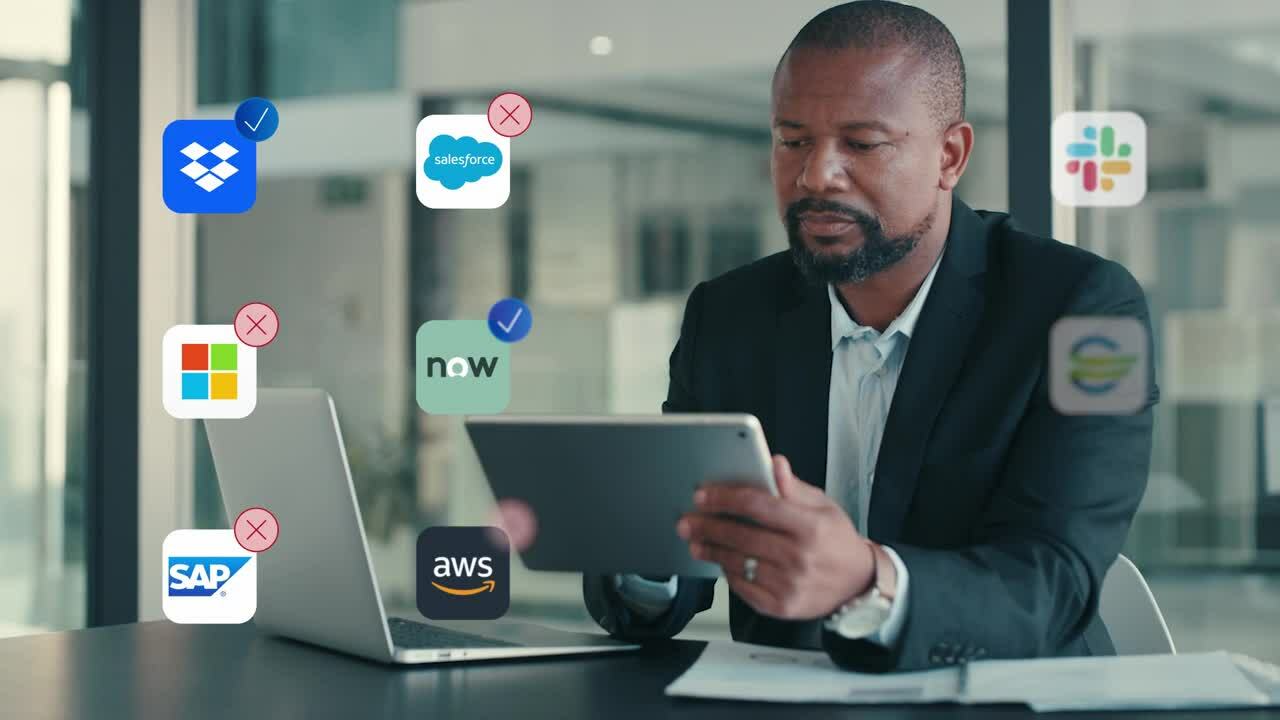

Max productivity. Real-time security.

Bring automation to your identity security efforts with SailPoint IdentityIQ. Easily add users and scale to fit the demands of your organization.

Secure your remote workforce

Manage access to applications, resources, and data through streamlined self-service requests and lifecycle event automation. Adjust access automatically based on role changes.

Automate provisioning tasks

Deliver the right access when workers need it while enabling effective management of high volumes of requests and changes.

Maintain auditable compliance

Automate audit reporting, access certifications, and policy management. Continuously review user access and refine policies for strong governance.

Customer Story

Delivering comprehensive identity security

Related resources

Explore the benefits of IdentityIQ

Get started

See SailPoint IdentityIQ in action

Let us show you how SailPoint IdentityIQ can govern access to all your essential business applications.